The use of foreign AI solutions in government institutions: a significant security risk, but the solution already exists

Artificial intelligence is rapidly establishing itself in both business and the public sector, but it also brings a new, often underestimated risk — the loss of data control. The majority of AI solutions used today operate outside the European Union, which means that even everyday employee actions can have serious consequences. However, according to Renata Špukienė, the head of Tilde IT, this situation is not a dead end — a technological solution already exists that allows even the most sensitive institutions to use artificial intelligence safely.

Data often leaves the organisation without anyone even realising it

In the public sector, AI tools often appear not as a strategic decision, but as everyday work “assistants”. This is precisely where R. Špukienė says the greatest risk lies.

“Employees, in an effort to work faster and more efficiently, are beginning to use publicly available artificial intelligence tools — often without clear guidelines on what is and isn’t permitted. It can seem that uploading an excerpt of a document, or asking to draft a letter or a summary, is a completely harmless action. However, in reality, this means that the organisation’s internal information is transferred to external servers, which are often outside the European Union. At this point, the organisation essentially loses control: it no longer knows where the data is stored, who can access it, or how it might be used in the future,” notes R. Špukienė.

In her opinion, the problem is even greater because such actions are often not visible or controlled at the organisational level.

“The greatest risk is that it happens quietly — not through officially implemented systems, but through individual employee decisions. One person tries something out, another sees that it works, and little by little, a practice takes shape that actually raises some very serious security concerns. This is especially true in the public sector, where personal data, financial information, or even matters of national security are handled; such a grey area is extremely dangerous,” she emphasises.

Risk becomes a matter of national security

Giedrius Karauskas, head of the technology department at Tilde IT, agrees, stressing that the problem is not merely technological but also has a broader, strategic context.

“It is often thought that this is a matter for IT specialists or cybersecurity experts, but in reality, we are talking about a much broader issue: data sovereignty. If an organisation has no control over where its data is located and what happens to it, then it loses some control over its operations. In the public sector, this affects not only internal processes but also trust in the state,” states G. Karauskas.

He also presents a specific scenario that helps to better understand the extent of the risk.

“Let us imagine a situation where an employee handling sensitive information (for example, at the Ministry of Defence or a law enforcement agency) uses an external AI tool to process text more quickly or to prepare a response. If the text contains sensitive information, it may be processed and stored outside the organisation. In this case, we are no longer talking about a theoretical risk but about a very concrete threat, as the organisation no longer has any real way to control what will happen to that data next,” he says.

G. Karauskas also points to a further problem: most of these tools are “free”.

“It’s important to understand very clearly that if you don’t pay for a product with money, you often pay with your data. The information entered may be used for model improvement or other purposes, and organisations often lack full transparency about how this is done. For businesses, this is already a challenge, and for the public sector — a critical risk,” he emphasises.

Solution — AI that remains within the organisation

“The biggest mistake is to think that we have to choose between innovation and security. In fact, today’s technology makes it possible to have both. The key difference arises when artificial intelligence doesn’t operate somewhere outside the organisation, but within it, on its own servers or within a trusted European infrastructure. In such a case, all data remains under the organisation’s control; it doesn’t go anywhere, and we can safely use AI, even with sensitive information,” explains Renata Špukienė.

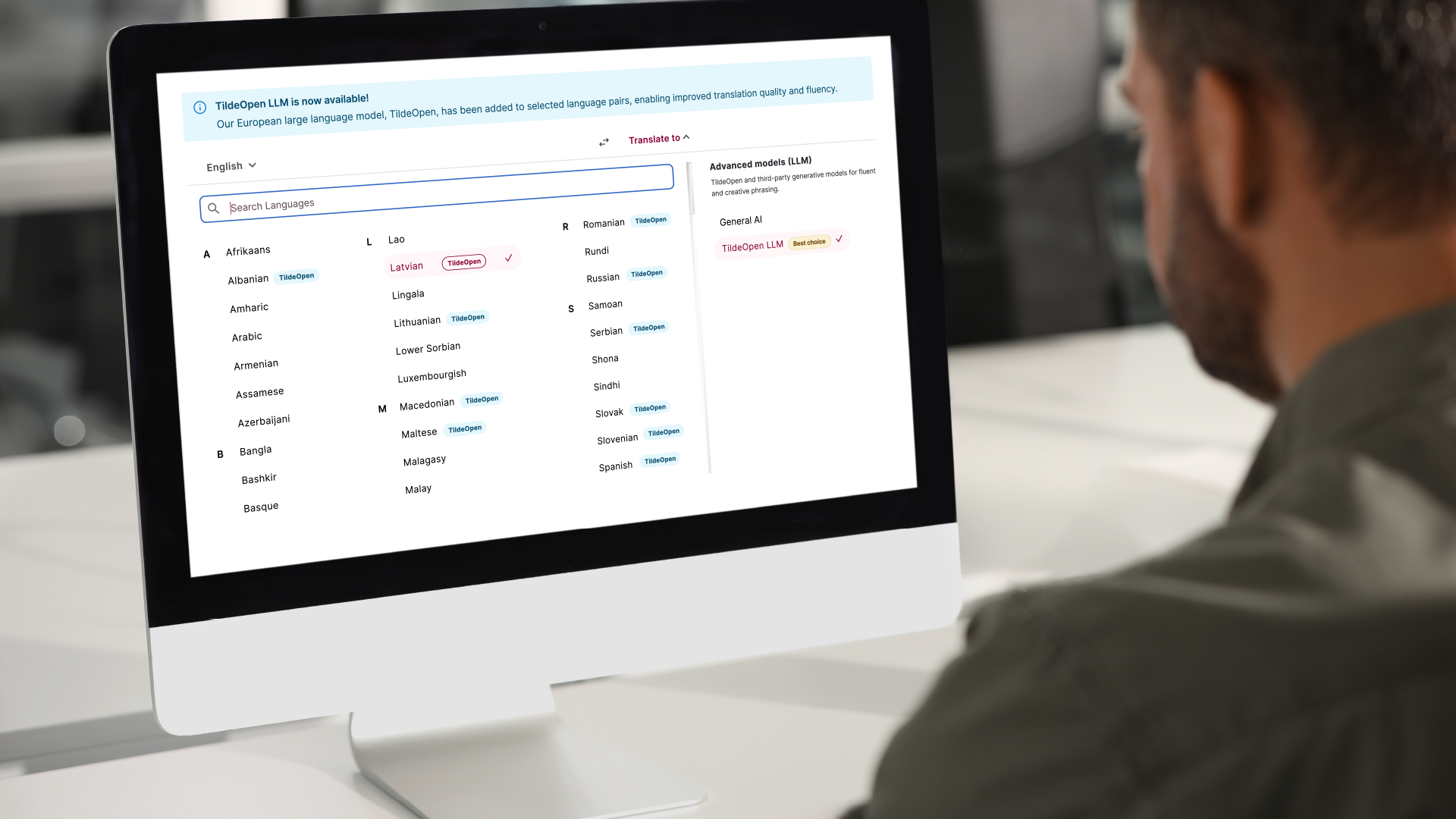

This is precisely the principle implemented by the TildeOpen large language model developed by Tilde.

“It is a foundational model adapted for European languages, including Lithuanian, which can be deployed within an organisation and used as a basis for various solutions. In practice, this means that institutions can create internal AI assistants, automate document processing, analyse data, or manage communication — all without fearing data leaks. This is particularly important for organisations that, until now, have essentially been unable to use AI due to security requirements,” says R. Špukienė.

According to her, Lithuania has taken an important step in this regard.

“We already have our own large language model, which allows not only the use but also the creation of artificial intelligence in Lithuania. The question now is not a technical one, but a strategic one: will we take advantage of this opportunity? This will determine whether we will merely be consumers of global solutions, or become their creators and boost the country’s competitiveness,” concludes R. Špukienė.